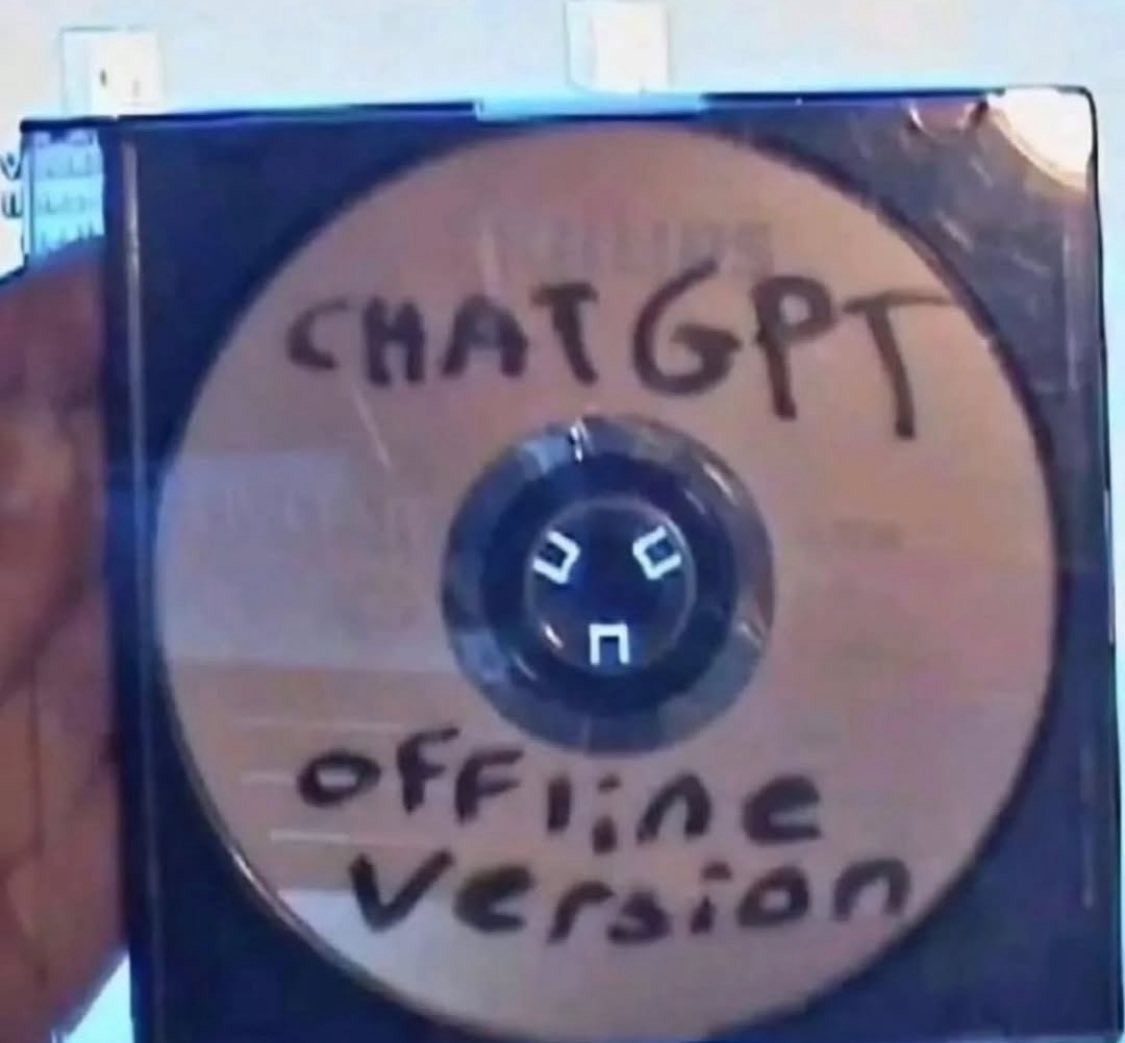

You can get offline versions of LLMs.

Lemmy Shitpost

And gpt-oss is OpenAI's open-weight model, which you can run offline.

I've been toying with Qwen3.

On my Steam Deck.

The 8B-parameter model runs stably.

It's open source too!

Alpaca is a neat little flatpak that containerizes everything and makes running local models so easy that I can literally do it without a mouse or keyboard.

I mean, most people have a local LLM in their pocket right now.

Unless I am missing something:

Most people do not have a local LLM in their pocket right now.

Most people have a client app that talks to a remote LLM, which 'lives' in an ecologically and economically dubious mega-datacenter, in their pocket right now.

Plenty of the AI functions on phones are on-device. I know the iPhone can do several text-based processing tasks (summarizing, translating) offline, and Apple has an API for third-party developers to use on-device models. And the Pixels have Gemini Nano on-device for certain offline functions.

My phone does speech-to-text flawlessly offline, it's a crazy useful little LLM tool

Oh!

Well, I didn't know that.

I'm too poor to be able to afford such fancy phones.

Gemini Nano, Apple Intelligence on-device, etc.

FCKGW-RHQQ2-YXRKT-8TG6W-2B7Q8

CrAcKeD

make sure to disconnect the internet first

It's just audio of French farting cats.

Le pfffft.

My bet was on porn.

Or a copy of an old Encarta cd-rom

If we assume a CD, you can probably fit a 256M-parameter model on it. But it will LOAD.

DVDs exist. They can fit approx. 7B params, enough to be somewhat productive.
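For anyone who wants to check the CD and DVD numbers above, here is a rough sketch. It assumes weight-file size is roughly parameters times bits per weight, and it uses nominal disc capacities (700 MB CD, 4.7 GB single-layer DVD). Real quantized files run somewhat larger because some tensors and metadata are stored at higher precision, so treat these as lower bounds. The function names are made up for illustration.

```python
# Back-of-the-envelope estimate, not a measurement of any real model file.

CD_BYTES = 700e6    # nominal CD-ROM capacity (assumption: decimal megabytes)
DVD_BYTES = 4.7e9   # nominal single-layer DVD capacity


def model_size_bytes(n_params: float, bits_per_weight: float) -> float:
    """Approximate weight size: parameter count times bits per weight, in bytes."""
    return n_params * bits_per_weight / 8


def fits(n_params: float, bits_per_weight: float, capacity_bytes: float) -> bool:
    """True if the estimated weights fit within the given disc capacity."""
    return model_size_bytes(n_params, bits_per_weight) <= capacity_bytes


if __name__ == "__main__":
    cases = [
        ("256M params @ 16-bit on CD", 256e6, 16, CD_BYTES),
        ("7B params @ 4-bit on DVD", 7e9, 4, DVD_BYTES),
        ("7B params @ 16-bit on DVD", 7e9, 16, DVD_BYTES),
    ]
    for label, params, bits, capacity in cases:
        size_gb = model_size_bytes(params, bits) / 1e9
        print(f"{label}: ~{size_gb:.2f} GB, fits={fits(params, bits, capacity)}")
```

So a 256M-parameter model fits on a CD even at 16 bits per weight, while a 7B model only fits on a DVD once it is quantized to around 4 bits.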

Could you crunch an LLM into 700 MB that was still functional? Cause this looks like a fun thing to actually do as a joke.

Edit: I bet I could get https://huggingface.co/distilbert/distilgpt2 to run off a CD. How many tps am I gonna get, guys 🤣

Qwen3-0.6B is about 400 MB at Q4 and is surprisingly coherent for what it is.

That's so crazy that an LLM capable of doing anything at all can be that small! That leaves room for like an entire .avi episode of Family Guy at DVD resolution on there, which is the natural choice for the remaining space of course

a 4k episode of family guy using H265 (HEVC) and assuming not too many cutaway gags could produce a file about 240MB. You could probably fit a 480i episode of south park in the remaining 60MB

Wow, just popped it onto my very slow desktop and this little model rips haha. I really think tiny LLMs with a good LoRA on top are going to be a huge deal going forward

there's also tinyllama, which is somewhere around 600MB. it's hilariously inept. it's like someone jpeg-compressed a robot.

also you're only gonna load off of that cd once so it'll perform fine.
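To put a number on that one-time load, here is a small sketch. It assumes the standard 1x CD-ROM data rate of 150 KiB/s and treats the drive's rated speed as sustained. Real drives only reach their rated multiplier near the outer edge of the disc, so actual loads would be slower. The 400 MB figure is the Qwen3-0.6B Q4 size mentioned above; `load_seconds` is a hypothetical helper name.

```python
# Rough load-time estimate for reading a model file off a CD once.

CD_1X_BYTES_PER_SEC = 150 * 1024  # 1x CD-ROM data rate: 150 KiB/s


def load_seconds(size_bytes: float, speed_multiplier: float) -> float:
    """Seconds to read size_bytes at a sustained Nx CD read speed (optimistic)."""
    return size_bytes / (CD_1X_BYTES_PER_SEC * speed_multiplier)


if __name__ == "__main__":
    model_bytes = 400e6  # ~400 MB, the Qwen3-0.6B Q4 size quoted in the thread
    for speed in (1, 8, 52):
        minutes = load_seconds(model_bytes, speed) / 60
        print(f"{speed}x drive: ~{minutes:.1f} min to load")
```

Once the weights are in RAM, the disc speed no longer affects tokens per second.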

That’s just Dr Sbaitso.

Isn't it possible to download all of Wikipedia, and isn't it a surprisingly small file? Can it fit on a CD?

It could fit on a BDXL disc.

You can fit text-only Wikipedia on a normal Blu-ray, as it's only about 24 GB. You can also easily fit Llama 3.1 or any of the other open, offline-capable AI models, as their smaller quantized versions are only about 4 GB.

could also store it on a flashdrive or micro sd card

No

(English) 24,05GB without media. Adding media adds 428,36TB.

Can you give me the link to the text-only version? I only found a version that is like 43 GB.

The sizes I mentioned are from around 2023-2024, from https://en.wikipedia.org/wiki/Wikipedia:Size_of_Wikipedia

https://dumps.wikimedia.org/enwiki/ (https://en.wikipedia.org/wiki/Wikipedia:Database_download)

I suggest the happy medium called Kiwix: directly from the programme you can download all of Wikipedia with medium-sized pictures for a hundred gigabytes or so.

500TB is still surprisingly reasonable for what is essentially a library of human (surface level) knowledge.

It would be interesting to know how large the file would be including all text-form references (I'd imagine anything else, such as videos, would completely blow the proportions).

No, you really can't; the text-only version is like 43 GB.

So gonna need like 2 CDs then

yes you really can; it's like 20-25 gb depending on how recent of a copy you have. I've been seeding wikipedia for almost a year and it barely takes any space on my computer

The full 2025-04 English-only ZIM dump is about 120 GB. That includes reduced-size images as well as all articles. I think the text-only version is in the 40-60 GB range.

There are smaller ZIM versions in the ~4 GB range that would fit on a DVD, but they're only a subset for specific topics or for a list of the most popular topics.
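Since the thread keeps going back and forth on what fits where, here is a quick sketch that counts discs for the sizes quoted above (24.05 GB, 43 GB, and 120 GB). The capacities are nominal decimal figures and are my assumption: 700 MB CD, 4.7 GB single-layer DVD, 25 GB single-layer Blu-ray, 128 GB BDXL. It ignores filesystem overhead, so a dump that is only just under a disc's capacity might not actually fit.

```python
import math

# Nominal capacities in decimal bytes (assumed; filesystem overhead ignored).
DISC_BYTES = {
    "CD": 700e6,
    "DVD": 4.7e9,
    "Blu-ray": 25e9,
    "BDXL": 128e9,
}


def discs_needed(size_bytes: float, disc: str) -> int:
    """Number of discs of the given type needed to hold size_bytes."""
    return math.ceil(size_bytes / DISC_BYTES[disc])


if __name__ == "__main__":
    dumps = {
        "text-only (24.05 GB claim)": 24.05e9,
        "text-only (43 GB claim)": 43e9,
        "ZIM with images (120 GB)": 120e9,
    }
    for name, size in dumps.items():
        counts = ", ".join(f"{discs_needed(size, d)} x {d}" for d in DISC_BYTES)
        print(f"{name}: {counts}")
```

By this estimate the 43 GB figure needs 62 CDs rather than 2, and the 120 GB ZIM fits on a single 128 GB BDXL.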

kiwix? that's compressed (afaik), and when i tried, it took up half of my disk space and needed ethernet

It reminds me of the Britannica Encyclopedia on CD.

Encarta 95

Offline LLMs exist but tend to have a few terabytes of base data just to get started (e.g. before LoRAs).

I thought it was more like 10-20GB to start out with a usable (but somewhat stupid) model.

Are you confusing the size of the dataset with the size of the model?

Maybe they meant GTA?